I've got a first answer which again goes in the direction of "hashes are generally a good idea" and, out of that (not so wrong) thinking, tries to rationalize the use of hashes with (IMHO) wrong arguments.

"Hashes are better or faster because you can reuse them later" was not the question.

"Assuming that many (say n) files have the same size, to find which are duplicates, you would need to make n * (n-1) / 2 comparisons to test them pair-wise all against each other. Using strong hashes, you would only need to hash each of them once, giving you n hashes in total." is skewed in favor of hashes and (IMHO) wrong too. Why can't I just read a block from each same-size file and compare it in memory? If I have to compare 100 files, I open 100 file handles, read a block from each in parallel, and then do the comparison in memory. This seems to be a lot faster than updating one or more complicated, slow hash algorithms with these 100 files.

Given the very big bias in favor of "one should always use hash functions because they are very good!", I read through some SO questions on hash quality.

So under the assumption that I don't usually have a ready-calculated and automatically updated table of all files' hashes, I need to calculate the hash and read every byte of the duplicate candidates. A byte-by-byte compare doesn't need to do this.
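The block-wise comparison of same-size files described above can be sketched roughly as follows. This is only a minimal illustration, not a tuned implementation: the function name `split_by_content` and the 64 KiB block size are assumptions, and it presumes the operating system allows all candidate files to be open at once.

```python
from collections import defaultdict

BLOCK_SIZE = 64 * 1024  # assumed block size, not taken from the question


def split_by_content(paths, block_size=BLOCK_SIZE):
    """Partition same-size files into groups of byte-identical files.

    One block is read from every file in a group, the group is split by
    block content, and a file that ends up alone is never read again.
    """
    handles = {p: open(p, "rb") for p in paths}
    try:
        pending = [list(paths)]
        duplicates = []
        while pending:
            group = pending.pop()
            if len(group) < 2:
                continue
            # The dict is only a grouping convenience: keys are compared
            # by value, so files in one bucket really share this block.
            buckets = defaultdict(list)
            for p in group:
                buckets[handles[p].read(block_size)].append(p)
            for block, members in buckets.items():
                if len(members) < 2:
                    continue  # unique so far: stop reading this file
                if block == b"":
                    # All members hit end-of-file together: identical.
                    duplicates.append(sorted(members))
                else:
                    pending.append(members)
        return sorted(duplicates)
    finally:
        for h in handles.values():
            h.close()
```

Called with a list of same-size paths, it returns a list of groups, each holding two or more identical files. A file is read only up to the first block in which it differs from every other candidate, and true duplicates are read exactly once.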

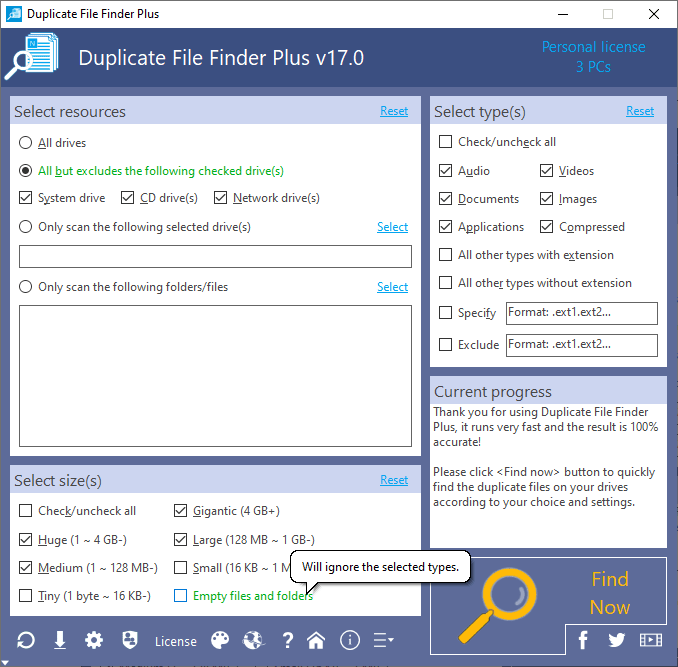

In the common scheme, the same hash means identical files: a duplicate is found. Sometimes the speed is increased by first using a faster hash algorithm (like md5) with a higher collision probability and then, if the hashes match, using a second, slower but less collision-prone algorithm to confirm the duplicates. Another improvement is to first hash only a small chunk to sort out totally different files.

So I've got the opinion that this scheme is broken in two different dimensions:

- duplicate candidates get read from the slow HDD again (first chunk) and again (full md5) and again (sha1).
- by using a hash instead of just comparing the files byte by byte we introduce a (low) probability of a false positive (two different files reported as identical).
- a hash calculation is a lot slower than just a byte-by-byte compare.

Am I totally wrong with my ideas and opinion? I found one (Windows) app which claims to be fast by not using this common hashing scheme.

There seems to be some opinion that hashing might be faster than comparing. But that seems to be a misconception arising from the general notion that "hash tables speed things up". To generate a hash of a file for the first time, the file needs to be read fully, byte by byte. So there is the byte-by-byte compare on the one hand, which only reads as many bytes of every duplicate candidate as it takes to reach the first differing position. And there is the hash function, which generates an ID out of so and so many bytes: let's say the first 10k bytes of a terabyte, or the full terabyte if the first 10k are the same.
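For contrast, the staged hashing scheme discussed above (size, then a hash of the first chunk, then full md5, then sha1) might look roughly like this. It is a sketch under assumptions: the helper names `file_digest`, `refine` and `staged_hash_duplicates` are made up for illustration, and the 10 KiB prefix simply mirrors the "first 10k bytes" mentioned in the text.

```python
import hashlib
import os
from collections import defaultdict

CHUNK = 10 * 1024  # "first 10k bytes", as in the text


def file_digest(path, algo, limit=None, block_size=64 * 1024):
    """Hash up to `limit` bytes of a file (the whole file if limit is None)."""
    h = hashlib.new(algo)
    remaining = limit
    with open(path, "rb") as f:
        while remaining is None or remaining > 0:
            n = block_size if remaining is None else min(block_size, remaining)
            block = f.read(n)
            if not block:
                break
            h.update(block)
            if remaining is not None:
                remaining -= len(block)
    return h.hexdigest()


def refine(groups, key):
    """Split every group by key(path); keep only groups of two or more."""
    out = []
    for group in groups:
        buckets = defaultdict(list)
        for p in group:
            buckets[key(p)].append(p)
        out.extend(g for g in buckets.values() if len(g) > 1)
    return out


def staged_hash_duplicates(paths):
    """Report files as duplicates when size and all staged hashes match."""
    groups = refine([list(paths)], os.path.getsize)
    groups = refine(groups, lambda p: file_digest(p, "md5", limit=CHUNK))
    groups = refine(groups, lambda p: file_digest(p, "md5"))   # full read
    groups = refine(groups, lambda p: file_digest(p, "sha1"))  # full read again
    return sorted(sorted(g) for g in groups)
```

Written this way, every surviving candidate is opened three times (prefix, full md5, full sha1), which is the repeated reading the list above objects to, and the final verdict rests on hash equality rather than on a direct comparison of the bytes.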
